Deployed in DoW Gemini Enterprise and DoD Ask Sage (IL5).

Governed AI behavior through rules and disciplined human use.

A3T (AI-as-a-Team) manages an AI system's inputs and outputs. Governance is applied at the system boundary, shaping how the system behaves in practice. The model provides generative capability, but the AI that matters is the governed system that uses it.
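As an illustration of what governing at the system boundary can mean, here is a minimal Python sketch. It is not the A3T implementation: every name in it (`governed_call`, `GovernanceError`, the two example rules, and `echo_model`) is hypothetical, and the rules are deliberately trivial. It only shows the general pattern of checking inputs before the model runs and checking outputs before anything is returned.

```python
from typing import Callable, List, Optional

# Hypothetical sketch: none of these names come from A3T itself.
# A rule inspects text and returns a reason string if it rejects it, else None.
Rule = Callable[[str], Optional[str]]


class GovernanceError(Exception):
    """Raised when an input or output rule rejects the text."""


def require_nonempty(text: str) -> Optional[str]:
    """Example input rule: refuse to proceed on an empty request."""
    return "empty text" if not text.strip() else None


def forbid_unsupported_certainty(text: str) -> Optional[str]:
    """Example output rule: flag an absolute claim (toy keyword check)."""
    return "unsupported certainty claim" if "guaranteed" in text.lower() else None


def governed_call(
    model: Callable[[str], str],
    prompt: str,
    input_rules: List[Rule],
    output_rules: List[Rule],
) -> str:
    """Run the model only if the input passes, and return only checked output."""
    for rule in input_rules:
        reason = rule(prompt)
        if reason:
            raise GovernanceError(f"input rejected: {reason}")

    response = model(prompt)  # the model is treated as an opaque callable

    for rule in output_rules:
        reason = rule(response)
        if reason:
            raise GovernanceError(f"output rejected: {reason}")
    return response


def echo_model(prompt: str) -> str:
    """Stand-in for a real model call, used only for this illustration."""
    return f"Draft answer to: {prompt}"
```

Calling `governed_call(echo_model, "Summarize the report", [require_nonempty], [forbid_unsupported_certainty])` returns the model's draft, while a blank prompt raises `GovernanceError` before the model is ever invoked. The model itself is untouched; all of the control sits in the wrapper around it.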

Also operational in commercial OpenAI (GPT), Microsoft (Copilot), Perplexity, and Anthropic (Claude) environments.

Measurable Impact

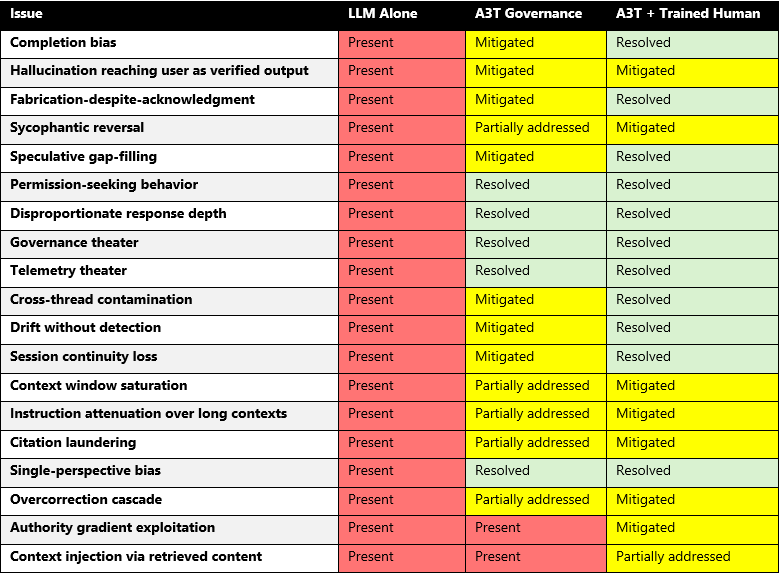

The A3T Agentic Toolkit adds installable constraints that reduce common AI failure modes. The results are more consistent reasoning, stopping when information is insufficient, and reduced drift during long-running work. This table shows what changed under governance and what changed further when a trained human operates within the framework.

A3T governance alone shifts most issues from "present" to "mitigated" or "resolved." With a trained human operator, 11 of 19 issues reach "resolved" status. The remaining gaps are documented, not hidden.

Who We Are

Bridgewell Advisory is an applied AI research lab advancing the frontier of agentic intelligence and assurance, exploring how teams of AI can reason coherently, explain their thinking, and stay aligned as they evolve.

We design and test architectures that deliver the discipline of reasoning humans require from systems used in high-stakes work. Our flagship framework, A3T (AI-as-a-Team™), equips large language model systems with the structure, continuity, and accountability to operate as coordinated teams of agents working alongside people, not just for them.

Our research spans continuity, alignment, and explainability, translating these principles into methods enterprises can adopt to make advanced AI understandable, verifiable, and governable without slowing innovation.

Our mission: to turn orchestration into assurance so humans and AI teams can reason together, adapt in real time, and pursue truth with clarity and confidence.

From Concept → Validation

MVP (Mar–Apr 2025)

Initial agentic concepts tested and proven — established a cost-effective agentic development environment within OpenAI.

MVP (May–Jun 2025)

Built and released the A3T MVP — working orchestrated AI demonstrating disciplined collaboration inside a fully local, stand-alone runtime.

Scientific Partnership (Jul → Dec 2026)

Running drift and recovery experiments with a leading research university to instrument and measure continuity, drift, and recovery behaviors within the A3T environment.

Operational Validation (Aug–Oct 2025)

A3T governance deployed and operational across OpenAI (GPT), Microsoft (Copilot), Perplexity, and Anthropic (Claude) environments.

Operational Validation (Nov–Dec 2025)

A3T governance extended to Government and DoD IL5 environments, including Google (Gemini) and Ask Sage.

Coming Next (2026)

Release the A3T Agentic Toolkit for commercial and government AI systems.

The Truth Spiral™ — Our Public Gift

This version of the Truth Spiral Protocol is a portable prompt that teaches large language model systems to reason step by step. It makes them check facts, question assumptions, and show their work so outputs can be verified. It was derived from the broader patent-pending A3T assurance framework that supports enterprise-scale AI systems.

The protocol is free for education and research. It serves both as a compact tool for training teams in critical thinking and as a window into how disciplined reasoning makes AI explainable and safe to trust.

Testimonials

These documents capture responses from AI systems operating under A3T governance across four platforms. Each was generated during or after structured sessions where governance constraints were active. They are not scripted endorsements. They are observable outputs that show what governed behavior looks like when the system is asked to reflect on its own operation.

For Enterprises & Partners

A3T overlays your existing stack (Copilot, Azure OpenAI, or on-prem) to add assurance and controls that travel with your models, without exposing IP or slowing innovation.

Minimum requirements: persistent global memory (outside the model).
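To make "persistent global memory (outside the model)" concrete, the following Python sketch shows one simple way such a store could look. It is an assumption for illustration only, not the A3T mechanism: the `PersistentMemory` class, its method names, and the choice of a JSON file are all hypothetical. The point is simply that state is written somewhere the model does not own, so it survives across sessions.

```python
import json
from pathlib import Path
from typing import Any


class PersistentMemory:
    """Hypothetical key-value memory kept in a JSON file outside the model."""

    def __init__(self, path: str) -> None:
        self.path = Path(path)
        # Load earlier state if the file already exists, else start empty.
        if self.path.exists():
            self.data = json.loads(self.path.read_text(encoding="utf-8"))
        else:
            self.data = {}

    def remember(self, key: str, value: Any) -> None:
        """Store a JSON-serializable value and write it to disk immediately."""
        self.data[key] = value
        self.path.write_text(json.dumps(self.data, indent=2), encoding="utf-8")

    def recall(self, key: str, default: Any = None) -> Any:
        """Return a stored value, or the default if nothing was stored."""
        return self.data.get(key, default)
```

A value saved with `remember("decision", "use plan B")` in one session can be read back with `recall("decision")` by a new `PersistentMemory` instance pointed at the same file, independent of any model context window. A production deployment would more likely use a database or managed store; the file here just keeps the sketch self-contained.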

- AI Assurance Review: Rapid assessment of workflows for explainability and audit readiness.

- Pilot Integration: Deploy A3T controls in a high-value workflow to measure impact.

- Risk Mapping: Templates aligned to regulatory and quality standards.